Principles of test validation

Implementing a new medical diagnostic test is a complex process, and test validation is an integral component that ties together many aspects of implementation. Performing a test validation can be daunting, but it doesn’t have to be if you follow a structured, best-practice approach. When trying to conform with standards such as ISO15189, the requirement that validation be “as extensive as is necessary” can cause some consternation. This article outlines a best-practice, step-by-step process for test validation that can be easily adopted by the laboratory.

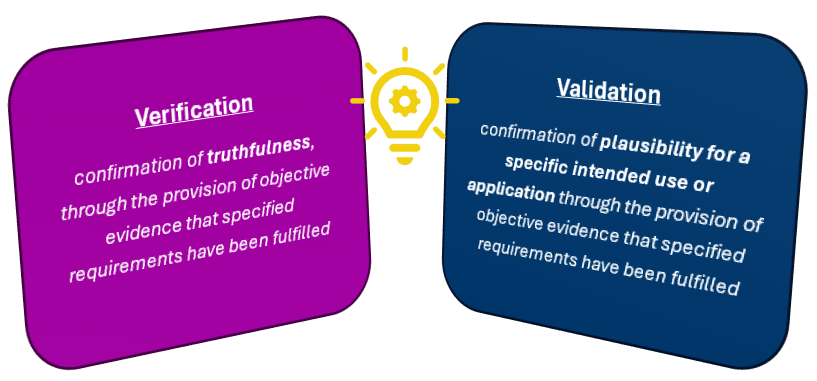

Firstly, let’s touch on the difference between test verification and test validation. The ISO15189 definition of verification is “confirmation of truthfulness, through the provision of objective evidence that specified requirements have been fulfilled”. Verification applies when a test already has established performance criteria (the “specified requirements”) from a manufacturer, such as sensitivity, specificity and limit of detection, and the laboratory’s job is to confirm that this performance can be replicated on site at your facility. However, the laboratory must perform the test exactly as intended, without deviating from stated requirements such as specimen types, reagents or volumes.

Validation, on the other hand, is defined in ISO15189 as “confirmation of plausibility for a specific intended use or application through the provision of objective evidence that specified requirements have been fulfilled”. This definition replaces “truthfulness” with “plausibility for a specific intended use or application”. In practice, this means that no predetermined performance criteria exist, so the laboratory must assess the test’s performance against a reference test and establish performance criteria that show the test is fit for purpose. As per ISO15189, the laboratory is required to validate examination methods derived from the following sources:

- Laboratory designed or developed methods (in-house tests)
- Methods used outside their originally intended scope (i.e. outside of the manufacturer’s instructions for use)
- Validated methods subsequently modified

As the last dot point indicates, when you modify an already validated test (commercial or in-house), you do not verify it against the previous validation; instead, you re-validate the amended test.

In essence, the purpose of validation is to show that the accuracy of a test meets the diagnostic requirements. While ISO15189 does not prescribe how to perform a test validation, it does state that validation must be “as extensive as is necessary”. It is therefore up to the laboratory to decide which performance characteristics are appropriate and how much testing is enough, and to be able to explain to assessors why its approach is sufficient. Following a structured approach to test validation can give your laboratory, and in turn assessors and clients, confidence that your validations are “as extensive as is necessary”.

The five stages of test validation are planning, data generation, report writing, implementation and continuous performance monitoring. The process starts with planning, to clearly define the intended use of the test. This is followed by designing experiments and generating data to support your specific requirements. Report writing is possibly the most important stage, as this is where you need to communicate your findings clearly and concisely. Implementation involves ensuring all the necessary factors are in place to start performing the test in a diagnostic capacity, which may include seeking accreditation. Finally, every test is continuously monitored over its lifetime. Working through each of these stages in turn, we will explore how to perform a test validation using a structured, best-practice approach.

In the planning stage of test validation, you start by clearly defining the intended use of the test and establishing a testing procedure that is fit for that purpose. As part of this stage, you will need to research the test and:

- Define the test target
- Define the methodology, including required reagents and equipment
- Determine appropriate controls and workflow
- Identify critical parameters affecting performance
- Define potential limitations
- Perform an assessment of use:
  - Will the measurements be diagnostically useful?
  - Will the method be sufficiently selective?
- Select appropriate performance characteristics for evaluation

You will also need to define acceptance/rejection criteria: the minimum acceptable values for the performance characteristics you have selected for evaluation. These values must be achievable while remaining diagnostically relevant. There is no point setting the bar so low that the test is guaranteed to pass but its results are clinically unusable.
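Writing the criteria down in a structured form before any data is generated makes the final pass/fail decision mechanical and easy to audit. The Python sketch below is one way to do that; the `Criterion` and `evaluate` names are illustrative only, and the characteristic names and limits are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """Acceptable limit for one performance characteristic (hypothetical structure)."""
    name: str
    limit: float
    higher_is_better: bool = True  # False for e.g. CV%, where lower is better

    def is_met(self, value: float) -> bool:
        return value >= self.limit if self.higher_is_better else value <= self.limit


def evaluate(criteria, observed):
    """Compare observed results with the pre-defined criteria.

    Returns (passed, failures). A characteristic named in the plan but missing
    from `observed` counts as a failure, because it was never demonstrated.
    """
    failures = [
        c.name
        for c in criteria
        if c.name not in observed or not c.is_met(observed[c.name])
    ]
    return len(failures) == 0, failures


# Hypothetical acceptance criteria fixed in the validation plan.
plan = [
    Criterion("sensitivity", 0.95),
    Criterion("specificity", 0.98),
    Criterion("cv_percent", 5.0, higher_is_better=False),
]
passed, failures = evaluate(plan, {"sensitivity": 0.97, "specificity": 0.96, "cv_percent": 3.2})
print(passed, failures)  # False ['specificity']
```

The `higher_is_better` flag exists because some criteria are maxima rather than minima (for example, an upper limit on CV%), so each criterion records which direction counts as a pass.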

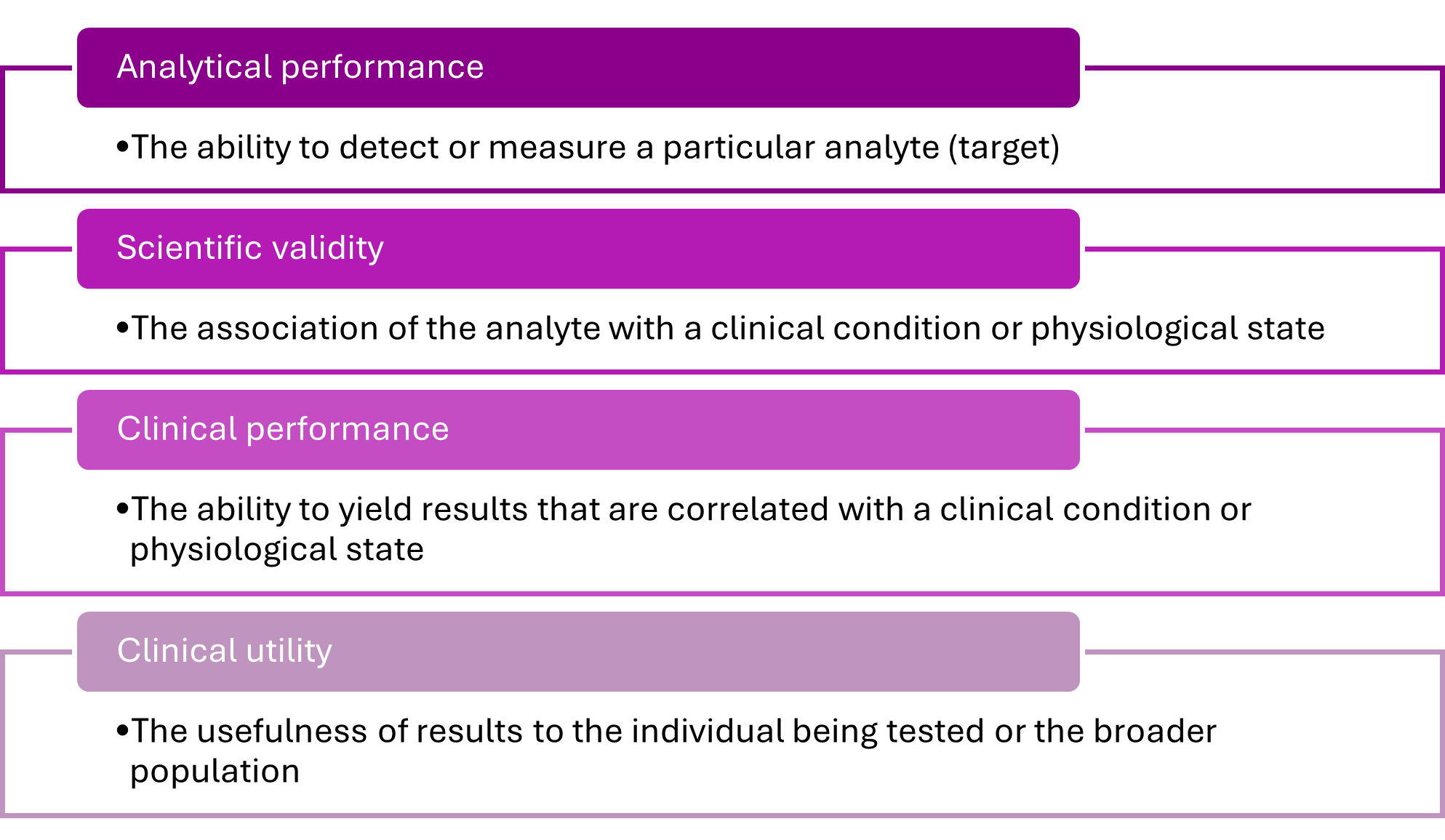

Planning is followed by the doing: data generation. At this stage you will need to ensure staff are trained in the methodology and authorised to perform test validation. ISO15189 (clause 6.2.3) requires personnel performing test validations to be authorised to do so, and it is best to document this authorisation in some way. The new test will usually be assessed against a ‘gold standard’ or reference test. In the absence of a suitable reference test or reference material, choose the most reliable test(s) currently available. The National Pathology Accreditation Advisory Council (NPAAC) provides guidance documents for medical testing in Australia and, regarding test validation, outlines requirements for analytical performance, scientific validity, clinical performance and clinical utility. Some of these requirements are addressed during the planning stage when the test is being researched, but analytical performance is addressed in the data generation phase and is the backbone of the test validation.
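For a qualitative (positive/negative) test, the comparison with the reference test is commonly summarised as a 2×2 table of agreement. The sketch below assumes such a design and uses hypothetical counts; it computes sensitivity, specificity and overall agreement against the reference, using the Wilson score interval as one common (not mandated) choice of 95% confidence interval. The function names are illustrative.

```python
import math


def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a proportion (about 95% coverage when z = 1.96)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half_width, centre + half_width


def compare_to_reference(tp, fp, fn, tn):
    """Summarise agreement of a new qualitative test with a reference test.

    tp: positive by both tests; fn: reference positive, new test negative;
    tn: negative by both tests; fp: reference negative, new test positive.
    """
    return {
        "sensitivity": tp / (tp + fn),
        "sensitivity_ci": wilson_interval(tp, tp + fn),
        "specificity": tn / (tn + fp),
        "specificity_ci": wilson_interval(tn, tn + fp),
        "overall_agreement": (tp + tn) / (tp + fp + fn + tn),
    }


# Hypothetical counts from testing 150 specimens with both methods.
summary = compare_to_reference(tp=48, fp=5, fn=2, tn=95)
for key, value in summary.items():
    print(key, value)
```

Reporting a confidence interval alongside each point estimate shows assessors how much the estimate could move with a different set of specimens; with only a few reference-positive specimens, the interval around sensitivity will be wide.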

During analytical performance evaluation, the relevant performance characteristics selected during planning are assessed, and there is a long list of possibilities. The characteristics chosen should best address the risks associated with the test. Depending on the test, performance characteristics to consider include, but are not limited to:

- Trueness, precision and accuracy
- Repeatability and reproducibility
- Robustness
- Sensitivity and specificity
- Limit of detection
- Matrix effect / interfering substances
- Positive and negative predictive values
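Positive and negative predictive values, unlike sensitivity and specificity, depend on how common the condition is in the tested population. The Python sketch below applies Bayes’ theorem to show this; the 95% sensitivity and specificity and the two prevalences are hypothetical.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a given prevalence using Bayes' theorem.

    Works with expected proportions of the population rather than counts.
    """
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# The same hypothetical test (95% sensitivity, 95% specificity) in two settings.
for prevalence in (0.5, 0.01):
    ppv, npv = predictive_values(0.95, 0.95, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV {ppv:.1%}, NPV {npv:.1%}")
```

With these inputs both predictive values are 95% at 50% prevalence, but at 1% prevalence the PPV falls to about 16%, because false positives from the large negative population outnumber the true positives. Predictive values measured on a validation panel enriched with positive specimens may therefore not reflect routine use.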

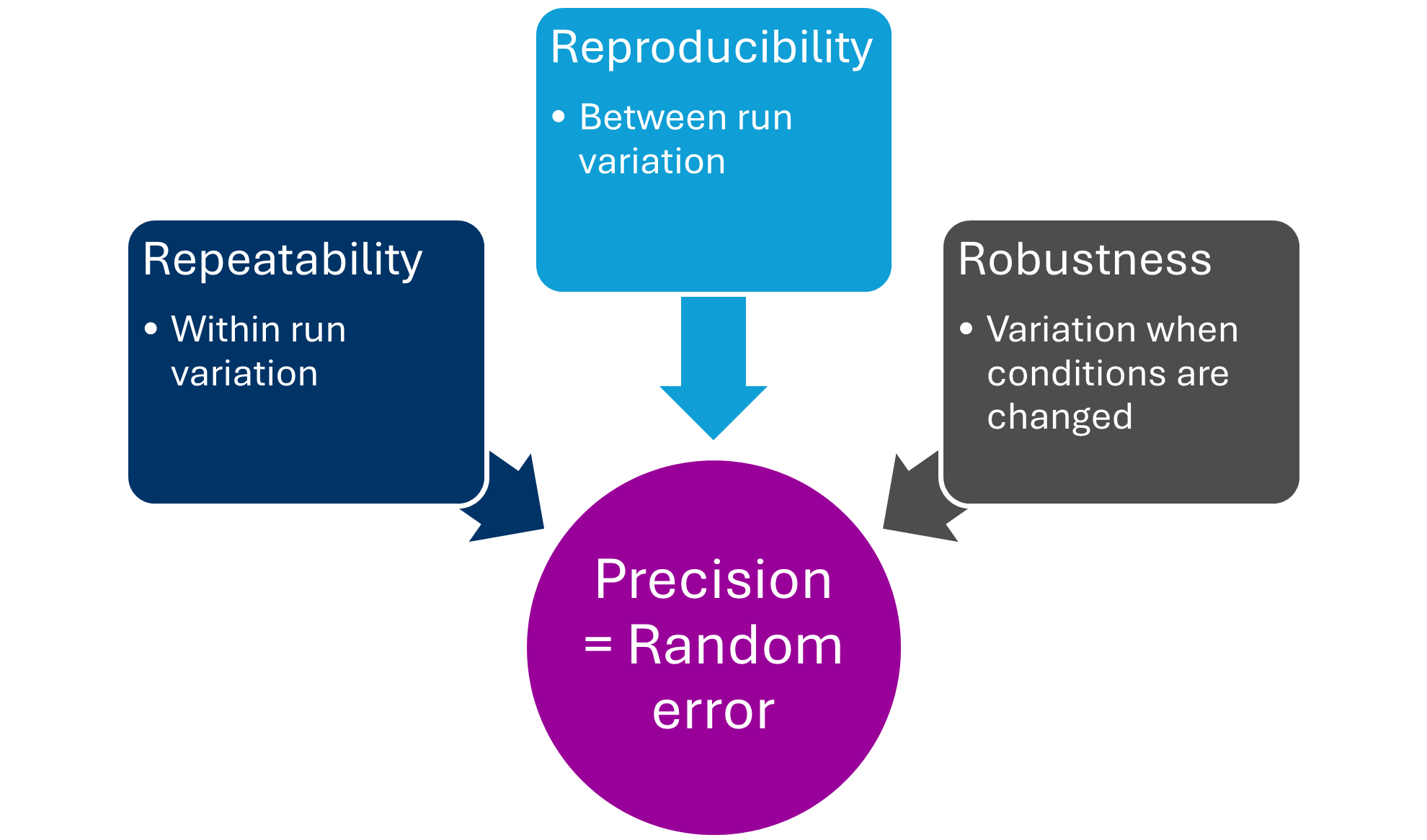

These characteristics are interrelated and often addressed together. For example, precision is the closeness of agreement between values obtained by replicate measurements on the same or similar objects under specified conditions. Precision reflects random error, which often cannot be corrected, so it must be investigated during validation. It is usually expressed as a standard deviation (SD) or coefficient of variation (CV), and can be assessed by investigating repeatability, reproducibility and robustness.
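The SD and CV calculations are simple enough to sketch. The example below assumes replicate measurements of a single control material, with hypothetical data, and contrasts a within-run set (repeatability) with a set spread across days or operators (reproducibility). A formal multi-factor precision study may instead partition the variance components, for example with ANOVA, rather than summarise each set separately.

```python
import statistics


def precision_summary(values):
    """Mean, sample SD and coefficient of variation (CV%) of replicate results."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    sd = statistics.stdev(values)  # sample SD, n - 1 denominator
    return mean, sd, 100 * sd / abs(mean)


# Hypothetical replicate results for one control material.
within_run = [10.1, 9.9, 10.0, 10.2, 9.8]    # repeatability: one run, one operator
between_runs = [10.3, 9.6, 10.0, 10.5, 9.6]  # reproducibility: different days/operators
for label, data in (("repeatability", within_run), ("reproducibility", between_runs)):
    mean, sd, cv = precision_summary(data)
    print(f"{label}: mean {mean:.2f}, SD {sd:.3f}, CV {cv:.1f}%")
```

CV% is unitless, which makes it convenient for comparing precision across concentration levels; it is undefined when the mean is zero, hence the guard in the function.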

After data is generated, it needs to be summarised in a clear and concise report. It is a good idea to use a report template so that all test validations are consistent across the organisation. Treat the report as a product you are preparing for presentation, because it is. This is what assessors and auditors will look at, and they need all the information required to see “that the extent of validation of an examination method is sufficient to ensure the validity of results” (ISO15189). However, the information needs to be presented in a user-friendly way, without overloading the report with excessive text or raw data. All data needs to be retained, but raw data can be included as an appendix, with summary tables provided in the body of the report. Write the report as you would a publication in a scientific journal, with a defined structure and word limits. Figures and tables are encouraged but need to be explained, and flowcharts can help describe complex processes and workflows. It is also a great idea to include a summary table at the start of the report that outlines all critical and pertinent information so it can be reviewed at a glance. If an auditor does not have to search for information, they are less likely to find things they don’t like during the search!

Consider including the following in the final report:

- Intended use
- Requirements and expected performance (if applicable):
  - Requirements must be met for the test to pass (i.e. minimum acceptable values for performance criteria)
  - Expected performance is less rigid and is a guide only; for example, you may expect the test to detect all known genotypes of the pathogen, and if it does not, this is not a failure but may be a limitation
- Define sample type and acceptance/rejection criteria
- Recommended reagents/instruments
- Reference sequences used (for any genomics test)
- Specimens tested, which should represent each of the reportable results or span the range of results
- Description of methodology
- Internal SOP and external references
- Controls, standards and calibrators used
- Details of reference method
- Investigation of appropriate analytical performance characteristics
- Define normal range/reportable range
- Data interpretation
- Limitations
- Description of failures and when re-examination is required
- Result reporting guidelines
- Explicit acceptance/rejection of the validation: have the criteria been met?
- Investigating scientist and senior scientist sign-off
- Director sign-off
- The documented plan as an appendix
- Checklist for implementation

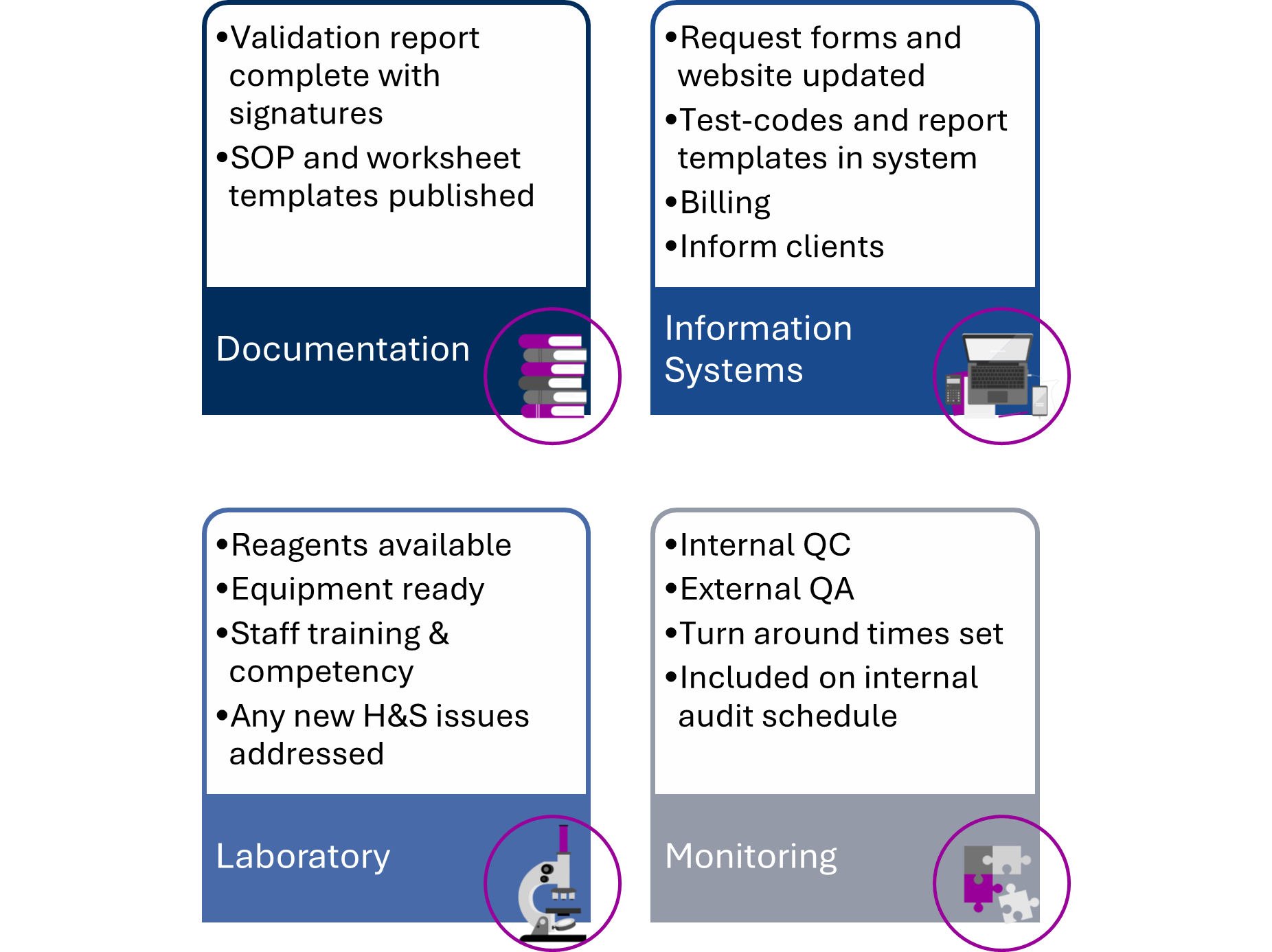

Following report approval, the test will need to be incorporated into the laboratory’s workflow. It is recommended to include an implementation checklist at the end of the report template to ensure all factors are considered (see graphic below). As part of the implementation stage, you may also be seeking accreditation for the test to be added to your scope. Assessors will want to see much of what is covered in this step, such as training and competency records, a published internal procedure, QAP enrolment and equipment maintenance.

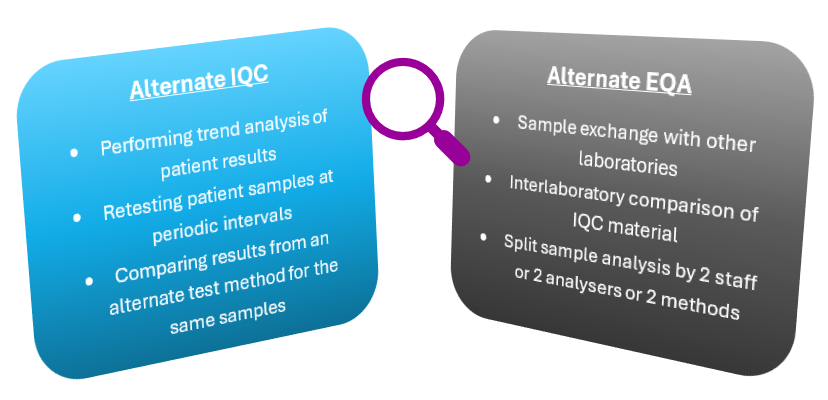

The final stage of test validation, ongoing performance evaluation, is never complete while the test is in use. It is important that monitoring arrangements are set up during implementation, so that it is clear how the laboratory intends to “ensure the validity of examination results” (ISO15189, clause 7.3.7). Data must be recorded and monitored in a way that makes trends (and shifts in trends) detectable. Internal quality control (IQC) monitors day-to-day test quality (within-laboratory variation), and external quality assurance (EQA) periodically monitors between-laboratory variation. If appropriate traditional IQC and/or EQA are not available for a test, an alternative way to monitor the test must be used.
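One widely used way to make shifts and trends in IQC data detectable is to plot control results on a Levey-Jennings chart and apply Westgard rules. ISO15189 does not prescribe particular rules; the three implemented below (1_3s, 2_2s and 10_x), the control mean of 100 and the SD of 2 are assumptions for illustration, and a laboratory would select its own rules and establish its own target mean and SD.

```python
def westgard_flags(results, target_mean, target_sd):
    """Flag IQC results against three commonly used Westgard rules.

    1_3s: one result more than 3 SD from the target mean.
    2_2s: two consecutive results more than 2 SD from the mean on the same side.
    10_x: ten consecutive results on the same side of the mean (possible shift).
    Returns a list of (index, rule) tuples in the order they are found.
    """
    z = [(x - target_mean) / target_sd for x in results]
    flags = []
    for i, zi in enumerate(z):
        if abs(zi) > 3:
            flags.append((i, "1_3s"))
        if i >= 1 and ((zi > 2 and z[i - 1] > 2) or (zi < -2 and z[i - 1] < -2)):
            flags.append((i, "2_2s"))
        if i >= 9:
            window = z[i - 9 : i + 1]
            if all(v > 0 for v in window) or all(v < 0 for v in window):
                flags.append((i, "10_x"))
    return flags


# Hypothetical control with an established mean of 100 and SD of 2.
print(westgard_flags([100, 101, 99, 105, 105, 93], target_mean=100, target_sd=2))
# [(4, '2_2s'), (5, '1_3s')]
```

The 1_3s rule is sensitive mainly to random error, while 2_2s and 10_x respond to systematic error, with 10_x picking up smaller sustained shifts that no single result would reveal.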

Following a structured, best-practice approach to test validation in medical testing laboratories will aid compliance with standards such as ISO15189. Clear processes give laboratory staff the confidence to perform test validations and present their data in concise, audit-ready reports.

If you would like Biorisk Busters to help train your team in this structured, best-practice approach to test validation, please reach out to us. We can offer a one-day course that can be delivered in-house at your organisation or online for your convenience, complete with bespoke examples and activities to ensure relevance to you. Let’s share excellence!

Recommended reading:

International Organization for Standardization. (2022). Medical Laboratories—Requirements for quality and competence (ISO Standard No. 15189:2022).

National Pathology Accreditation Advisory Council. (2025). Requirements for the development and use of in-house in vitro diagnostic medical devices, 5th Ed.

Jennings L, Van Deerlin VM, Gulley ML; College of American Pathologists Molecular Pathology Resource Committee. Recommended principles and practices for validating clinical molecular pathology tests. Arch Pathol Lab Med. 2009 May;133(5):743-55. doi: 10.5858/133.5.743. PMID: 19415949.

Mattocks, C., Morris, M., Matthijs, G. et al. A standardized framework for the validation and verification of clinical molecular genetic tests. Eur J Hum Genet 18, 1276–1288 (2010). https://doi.org/10.1038/ejhg.2010.101

Wallace P, McCulloch E. Quality Assurance in the Clinical Virology Laboratory. Encyclopedia of Virology. 2021:64–81. doi: 10.1016/B978-0-12-814515-9.00132-6. Epub 2021 Mar 1. PMCID: PMC7917444.